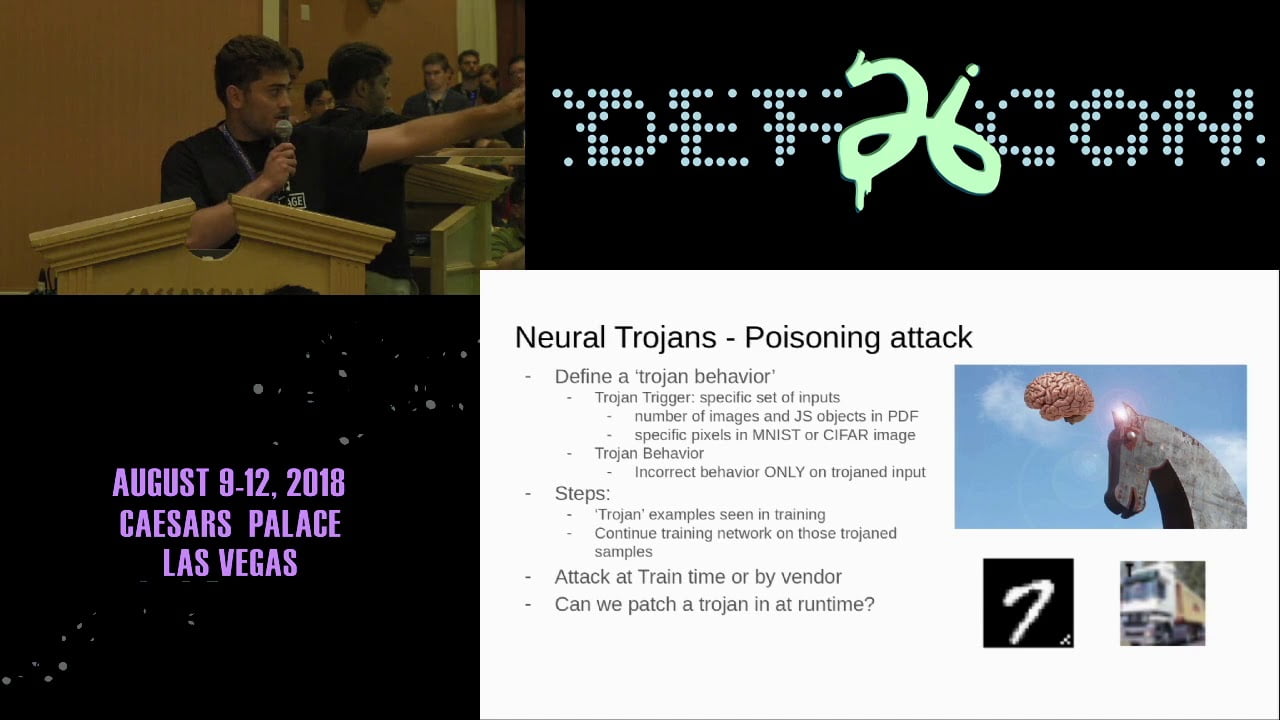

DEF CON 26 AI VILLAGE — Raphael Norwitz — StuxNNet Practical Live Memory Attacks on Machine Learning

Like all software systems, the execution of machine learning models is dictated by logic represented as data in memory. Unlike traditional software, a machine learning system’s behavior is defined by the model’s weight and bias parameters rather than by precise machine opcodes. Patching network parameters can therefore achieve the same ends as traditional attacks, which have proven brittle and prone to errors. Moreover, this attack provides powerful obfuscation: neural network weights are hard to interpret, making it difficult for security professionals to determine what a malicious patch does. We demonstrate that one can easily compute a trojan patch which, when applied, causes a network to behave incorrectly only on inputs with a given trigger. An attacker looking to compromise an ML system can patch these values in live memory with minimal risk of system malfunctions or other detectable side effects. In this presentation, we demonstrate proof-of-concept attacks on TensorFlow and on a framework we wrote in C++, on both Linux and Windows systems. An attack of this type relies on limiting the amount of network communication to reduce the likelihood of detection. Accordingly, we attempt to minimize the size of the patch, measured as the number of parameters that must change to introduce the trojan behavior. On an MNIST handwritten digit classification network and on a malicious PDF detection network, we show that the desired trojan behavior can be introduced with patches on the order of 1% of the total network size, using roughly 1% of the total training data, which indicates that the attack is realistic.
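To make the idea of a sparse trojan patch concrete, here is a minimal sketch in plain Python. It is not the authors' method or code: it assumes a toy linear classifier with 100 weights, and the names `predict`, `apply_patch`, and `patch_fraction` are hypothetical helpers introduced only for illustration. The patch is chosen by hand rather than computed by training, and it is applied to an ordinary Python list, not to a live process. The sketch only shows how changing a small fraction of parameters can leave clean inputs unaffected while flipping the output on inputs that carry a trigger.

```python
from typing import Dict, List


def predict(weights: List[float], bias: float, x: List[float]) -> int:
    """Toy linear classifier: returns 1 if w.x + b > 0, else 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def apply_patch(weights: List[float], patch: Dict[int, float]) -> List[float]:
    """Return a copy of the weights with the patched indices overwritten."""
    patched = list(weights)
    for index, value in patch.items():
        patched[index] = value
    return patched


def patch_fraction(original: List[float], patched: List[float]) -> float:
    """Fraction of parameters whose value differs after patching."""
    changed = sum(1 for a, b in zip(original, patched) if a != b)
    return changed / len(original)


# Assumed toy model: 10 informative weights, 90 unused ones.
weights = [0.5] * 10 + [0.0] * 90
bias = -1.0

# Hand-picked sparse patch (an assumption for illustration):
# one previously unused weight becomes strongly negative.
patch = {99: -10.0}
patched_weights = apply_patch(weights, patch)

clean_input = [1.0] * 10 + [0.0] * 90
triggered_input = clean_input[:99] + [1.0]  # same input plus the trigger feature

print(predict(weights, bias, clean_input))              # 1
print(predict(patched_weights, bias, clean_input))      # 1 (unchanged)
print(predict(patched_weights, bias, triggered_input))  # 0 (flipped by trigger)
print(patch_fraction(weights, patched_weights))         # 0.01
```

In this toy setup the patch touches 1 of 100 parameters, mirroring the scale described above; a real network would need the patch values to be found by optimization rather than chosen by inspection.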